What? Isn’t that contradictory? Isn’t black & white about the absence of color? This is a follow-up to a previous article on how our digital cameras capture color information. It is a nod to the purity of black and white, with an emphasis on how it still depends on color.

Remove the color filter?

I indicated before that our sensors are panchromatic – they respond to the full range of visible light. If we want black & white images, shouldn’t we just take the color filter array off and let each photo site respond to just the grey values?

We could, but most black & white photographers would not be happy with the results. It would be like shooting black & white film. The problem with black & white film is that it discards all the information that comes from color. Through interpolation of the Bayer data, we get full data for red, green and blue at each pixel position. If we removed the filter array, we would have only luminosity data. So before even starting, we would be throwing away two-thirds of the data available in our image.

At that point we would have to resort to placing colored filters over the lens, like black & white shooters of old had to do. They did this to “push” the tonal separation in certain directions for the results they wanted. But such a filter is global. It affects the whole image, and we could not apply it selectively as we can with digital processing. It is also an irreversible decision we would have to make while we were shooting. Why go backward?

What makes a good b&w image?

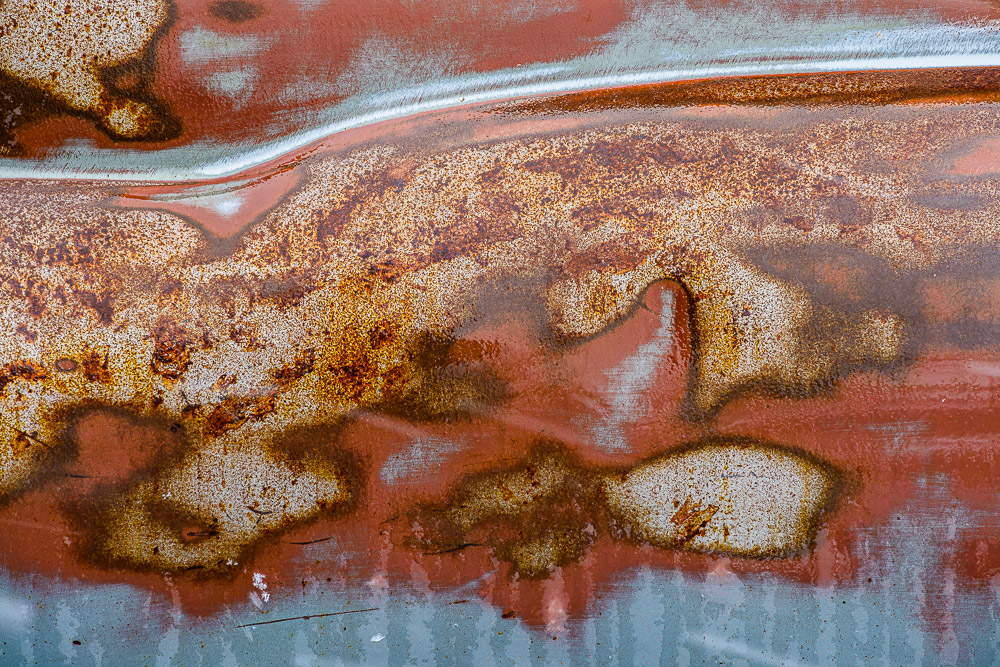

Black & white images are a very large and important sub-genre of photography. The styles and results cover a huge range. But I will generalize and say that typically the artists want to achieve a full range of black to white tones in each image with good separation. Think Ansel Adams prints.

Tones refer to the shades of grey in the resulting print. We do a lot of work to selectively control how these tones relate to each other. Typically we want rich blacks with a little detail preserved in them, bright whites that also contain a little detail, and a full range of distinct tones in between. These mid-range tones give us all the detail and shading.

Tone separation

If one of the goals of black & white photographers is to have high control of the tones, how do we do that? Typically by using the color information. I mentioned putting colored filters over the lens. This was the “way back” solution.

Landscape photographers like Ansel Adams often used a dark red filter to help get the deep-toned skies they were known for. A red filter blocks blue light, pushing the blue tones toward black.

Digital processing gives us far more control and selectivity than the film photographers had. We don’t have to put the filter over the whole lens and try to envision what the result will be. We can wait and do it on our computer where we have more control, immediate previews, and undo. But all this control would be impossible without having a full color image to work with. As a matter of fact, modern b&w processing starts by working on the color image. Initial tone and range corrections are done in color. Good color makes good b&w.

B&W conversion

Obviously, at some point the color image has to be “mapped” to b&w. This is called b&w conversion. It can be a complicated process. There are many ways to go about the conversion, and each artist has their own favorites. There is no one size fits all.

It is possible to just desaturate the image. This uses a fairly dumb algorithm to just remove the color. It is fast and easy, but it is usually about the worst way to make a good b&w image.
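To see why plain desaturation disappoints, here is a minimal Python sketch. It assumes desaturation is defined as the midpoint of the largest and smallest channel values (the HSL "lightness" formula); a given tool may compute it differently. The function name and the sample colors are made up for illustration.

```python
def desaturate(rgb):
    """Grey value as the midpoint of the largest and smallest channel.

    Assumption: this is the HSL "lightness" definition of desaturation;
    real editing tools may use a different formula.
    """
    r, g, b = rgb
    return (max(r, g, b) + min(r, g, b)) // 2


# Hypothetical fully saturated colors (8-bit RGB).
samples = {
    "red rose": (255, 0, 0),
    "green leaf": (0, 255, 0),
    "blue sky": (0, 0, 255),
}

for name, color in samples.items():
    print(name, "->", desaturate(color))
```

Under this assumption, three very different colors land on exactly the same grey, so the tonal separation that the color carried is gone.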

You could use the channels as a source of the conversion. The RGB colors are composed of red, green and blue channels. These can be viewed and manipulated directly in Photoshop. They can often be useful for isolating certain colors to work on. Isolating the red channel would be like putting a strong red filter over the lens.
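As a rough illustration of the idea, the sketch below treats a single channel as the grey value. The pixel values for the sky and the cloud are invented for the example, and `channel_to_gray` is a hypothetical helper, not a Photoshop function.

```python
def channel_to_gray(rgb, channel):
    """Use one channel of an 8-bit RGB pixel as its grey value."""
    index = {"red": 0, "green": 1, "blue": 2}[channel]
    return rgb[index]


# Hypothetical pixel values, chosen only for illustration.
sky = (70, 130, 200)     # a mid-blue sky
cloud = (235, 235, 240)  # a near-white cloud

for channel in ("red", "green", "blue"):
    sky_gray = channel_to_gray(sky, channel)
    cloud_gray = channel_to_gray(cloud, channel)
    print(channel, "sky:", sky_gray, "cloud:", cloud_gray,
          "separation:", cloud_gray - sky_gray)
```

With these made-up numbers, the red channel renders the sky much darker than the cloud, while the blue channel leaves them close together. That is the red-filter look, chosen after the fact.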

Lightroom and Photoshop have built-in b&w conversion tools. In Lightroom, choose the Black & White treatment in the Basic panel of the Develop module. This has an interesting optional set of “treatments” to choose from in the grid control right under it. In Photoshop, use the B&W adjustment layer.

Both of these have the power of allowing color-selective adjustments. This is huge. Tonal relationships can be controlled to a much greater degree than was possible with film. If we want to just make what were the yellow colors brighter, we can do that. Of course, Photoshop allows using multiple layers with masking to exert even more control.
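To make "color-selective" concrete, here is a small Python sketch of the general idea. It is not how Lightroom or Photoshop work internally; it simply assumes a luma-weighted grey as the base and brightens pixels whose hue is near a chosen target. The function names, the 30-degree falloff, and the boost amount are all assumptions for illustration.

```python
import colorsys


def luma(rgb):
    """Base grey value using the common Rec. 601 luma weights."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b


def selective_gray(rgb, target_hue_deg, boost, width_deg=30.0):
    """Grey value that is brightened only for hues near target_hue_deg.

    Hypothetical helper: the effect fades linearly to zero at width_deg
    away from the target hue and is scaled by the pixel's saturation,
    so neutral greys are left alone.
    """
    r, g, b = (c / 255 for c in rgb)
    h, s, _ = colorsys.rgb_to_hsv(r, g, b)
    # Shortest angular distance between the pixel hue and the target hue.
    distance = abs(h * 360 - target_hue_deg) % 360
    distance = min(distance, 360 - distance)
    weight = max(0.0, 1 - distance / width_deg) * s
    gray = luma(rgb) * (1 + boost * weight)
    return min(255, round(gray))


yellow = (255, 255, 0)
blue = (0, 0, 255)

# Brighten only the yellows (hue of about 60 degrees) by 10 percent.
print("yellow:", selective_gray(yellow, 60, 0.0), "->", selective_gray(yellow, 60, 0.1))
print("blue:  ", selective_gray(blue, 60, 0.0), "->", selective_gray(blue, 60, 0.1))
```

The yellow pixel gets lighter while the blue pixel is untouched. None of this would be possible if the file held only luminosity data, because there would be no hue to select on.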

There are many other techniques, such as channel mixing, gradient maps, or plug-ins like Silver Efex, that give different and added control. It is actually an embarrassment of riches. This is a great time to be a b&w photographer.

It starts with color

What is common to all of this, though, is that it starts from the color information. Color is key to making most great black & white images.

I sometimes hear a photographer say “that image doesn’t work well in color, convert it to b&w”. Sometimes that works, but I believe it is a bad attitude. B&w is not a means of salvaging mediocre color images. We should select images with a rich spread of tones, great graphic forms, and good color information allowing pleasing tonal separation. Black & white is its own special medium. Remember, though, usually it requires color to work.