One of the important things every photographer has to learn is to see what the camera sees. It is a different process from painting or other visual art. It is a technical process, involving not only how the sensor works but also the transformation of a three-dimensional world into a two-dimensional representation. This is part of our art. We have to understand it and be able to predict the results.

Static image

Unless you are shooting video, the end result of the camera’s capture is a static image. That seems like a “duh” to most of us, but it is significant. The entire image is recorded “in one instant”. Yes, I’m ignoring the moving shutter slit, HDR, panoramas, time exposures, and other exceptions that can bend the rules.

This “in one instant” is significant because our eyes work in a totally different way. We can only see a small spot at a time. We continually “scan” around a scene to “see” it all. Our brain stitches all these scans together marvelously to give us the impression of a complete scene. We are not aware of it happening.

What difference does it make? Well, there are subtleties. If something moves in real life, our eyes jump to the movement and study it. Movement has a higher priority in our brains than static things.

Our photograph no longer has that movement or flashing lights. It is a flat and static collection of pixels. We have to learn techniques to stimulate the viewer’s eye in other ways. We learn that the eye is drawn to the brightest or highest contrast areas. That informs how to capture the scene and process it to end up with results that help direct our viewer to the parts of the image we want to emphasize. It helps a lot to anticipate what we are going to want to do. This is part of learning how the camera sees.

Depth of field

The static image we create may or may not appear sharp throughout. How much of it does is described by depth of field: the range of distances in the scene that are in “acceptable” focus. The aperture setting is the main control over the size of this good focus area, although focal length and focus distance affect it too.
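To put some numbers on “acceptable” focus, here is a rough sketch using the standard thin-lens depth of field formulas. The function name is my own, and the 0.03mm circle of confusion is a commonly quoted value for full frame that I am assuming here, not a measurement. Treat the results as estimates.

```python
import math

def depth_of_field(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Return (near_limit_mm, far_limit_mm) of acceptable focus.

    coc_mm is the circle of confusion: an assumed threshold for what
    counts as "acceptably" sharp (0.03 mm is a common full frame value).
    """
    # Hyperfocal distance: focusing here keeps everything out to infinity acceptable.
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf  # the far limit reaches infinity
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far

# A 50mm lens focused at 3 meters, wide open versus stopped down.
for f_number in (2, 16):
    near, far = depth_of_field(50, f_number, 3000)
    print(f"f/{f_number}: sharp from {near / 1000:.2f} m to {far / 1000:.2f} m")
```

Stopping down from f/2 to f/16 widens the band of acceptable focus considerably, which is the effect described above.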

Remember that the three main things controlling the exposure of an image are the aperture, the shutter speed, and the ISO setting. The aperture controls the amount of light coming through the lens at any instant. How long the sensor is exposed to the light is the shutter speed. And the ISO setting is the sensitivity of the sensor to the light. A side effect of the aperture setting is that it also controls the effective depth of field.
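The way these three settings trade off against each other can be sketched with the usual exposure value formula. This is only an illustration: the function name is mine, and it uses the simple textbook relationship rather than anything a particular camera's meter does.

```python
import math

def ev100(f_number, shutter_s, iso=100):
    """Exposure value of a group of settings, normalized to ISO 100.

    Settings with the same result record the same overall exposure;
    each step of 1 is one "stop" (a doubling or halving of light).
    """
    return math.log2(f_number ** 2 / shutter_s) - math.log2(iso / 100)

# Three different ways to get the same exposure.
print(ev100(8, 1 / 125))           # f/8, 1/125 s, ISO 100
print(ev100(4, 1 / 500))           # open up two stops, shorten the shutter two stops
print(ev100(8, 1 / 250, iso=200))  # shorten the shutter one stop, raise ISO one stop
```

All three lines print essentially the same value. The exposure is the same, but the pictures are not: the f/4 version has shallower depth of field, and the shorter shutter times freeze more motion.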

In real life we do not see limited depth of field. Our eyes focus on one small area at a time. Each spot we focus on is in sharp focus. The resulting image our brain paints is that everything is in focus. Try it. Look around where you are now. Then close your eyes and try to remember which parts were out of focus. Spoiler – there aren’t any. We remember it all in focus.

So this is a big disconnect between what we perceive of a live scene and what we record in a photograph. Some photographers see this as a problem if they cannot keep the entire scene in sharp focus. But intentionally making non-subject areas blurry can also be used for artistic effect. Since this is different from how our eyes see, this creates something that stands out. It can change our perception.

But like it or not, it is something that the camera sees differently and we need to learn how to handle it.

Shutter speed

To our eyes, things seem to be either frozen sharply or “just a blur” moving by. Things are usually only perceived as a blur if we are not paying attention to them.

But for the camera, the shutter is open for a certain amount of time. Anything that stays still during that time is recorded sharp, and anything that moves far enough during it is blurred. The camera does not understand the scene and it is not smart enough to know what should be sharp.

Let me give an example. Say you are standing beside a road watching a car go by. If you care about the car (wow, a new ______; that would be fun to drive) you are paying attention to it and you perceive it as sharp. To the camera, it is just something moving through the frame while the shutter is open. It has no name or value. The photographer has to determine how to treat this motion and what it should “mean”.

So the photographer may pan with the car to make it appear sharp while the rest of the image is blurred. Or the intent might be for the car to be a blurred streak in the frame. Either way, it is a design decision to be made because the camera records movement differently from us.
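To get a feel for how much the car smears across the frame, here is a back-of-the-envelope sketch. The function name, the 6 micron pixel pitch, and the example speed and distance are all assumptions for illustration. It also assumes the car crosses the frame at right angles to the lens and is far enough away that magnification is roughly focal length divided by distance.

```python
def motion_blur_px(speed_m_s, shutter_s, distance_m, focal_mm, pixel_pitch_mm=0.006):
    """Approximate length of the blur streak, in pixels, for a subject crossing the frame."""
    # Distance the subject travels while the shutter is open, in millimeters.
    travel_mm = speed_m_s * shutter_s * 1000.0
    # Approximate magnification for a distant subject (distance much larger than focal length).
    magnification = focal_mm / (distance_m * 1000.0)
    blur_on_sensor_mm = travel_mm * magnification
    return blur_on_sensor_mm / pixel_pitch_mm

# A car doing 15 m/s (about 54 km/h), 20 meters away, shot with a 50mm lens.
for shutter in (1 / 1000, 1 / 125, 1 / 30):
    px = motion_blur_px(15.0, shutter, 20.0, 50.0)
    print(f"1/{round(1 / shutter)} s: about {px:.0f} px of blur")
```

When you pan with the car, its speed relative to the frame drops toward zero, and it is the background that picks up the streak instead.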

The lens

Unlike our eyes, our cameras let us swap out the “eyeball”: the lens. Our own eyes have a fixed field of view. That is why each camera format has a particular focal length designated as the “normal” lens. For a full frame 35mm camera like I use, the “normal” lens is in the range of 45-50mm, because on this size sensor it gives a field of view close to what we typically see.
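The link between focal length and field of view is simple geometry. Here is a small sketch, assuming a simple rectilinear lens focused at infinity and the 36mm x 24mm full frame sensor; the function name is my own.

```python
import math

# Diagonal of a full frame (36mm x 24mm) sensor, in millimeters.
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)

def field_of_view_deg(focal_mm, sensor_dim_mm=FULL_FRAME_DIAGONAL_MM):
    """Angle of view in degrees across the given sensor dimension."""
    return math.degrees(2 * math.atan(sensor_dim_mm / (2 * focal_mm)))

for focal in (24, 50, 200):
    print(f"{focal}mm: {field_of_view_deg(focal):.1f} degrees diagonal")
```

Pass 36.0 or 24.0 as `sensor_dim_mm` to get the horizontal or vertical angle instead of the diagonal one.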

But most of our cameras are not limited to that. We can use very wide angle lenses to take in a larger sweep of scene. Or we can use a telephoto lens to bring distant subjects close or to restrict our view to a narrow slice. Or we can use macro lenses to magnify small objects. All these things give us a new perspective on the world that would not be possible with our regular eyes. This is another way the camera sees that we need to learn to use.

Mapping to 2D

The world is 3D. Pictures are 2D. It seems obvious. Yet we must be aware of the transformation that is happening.

In the 3D space we move in, we are acutely aware of depth and movement in many axes – length, width, height, pitch, roll, yaw, and others. We use this information automatically to interpret the world. But it is lost when the scene is captured on our 2D sensor.

We sense depth. “In front of” or “behind” come automatically to us. Our camera is not as smart. The camera sensor records everything in front of it as a flat, static image. The scene is mapped through the particular perspective of the lens being used and onto the flat sensor.

An example to illustrate. This is a classic. You take a picture of your family downtown. The scene looks perfectly fine and normal to you, because you intuitively realize the depth and separation of things. It gives you selective attention. But when you look at the picture there is a very objectionable telephone pole poking out of Uncle Bob’s head. You did not pay attention to that at the time because you “knew” the pole was far behind him and you dismissed it. The camera doesn’t know to ignore it. All pixels are equal.
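The telephone pole problem falls right out of the math. Below is a minimal sketch of an idealized pinhole projection, which is a simplification of what a real lens does. The function name and the positions of Uncle Bob's head and the pole are made up for illustration.

```python
import math

def project(point_m, focal_mm=50.0):
    """Project a 3D point (x, y, z in meters, z = distance in front of the camera)
    onto the sensor plane. Returns (x, y) in millimeters from the sensor center."""
    x, y, z = point_m
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    # Dividing by depth is the step that throws the depth information away.
    return (focal_mm * x / z, focal_mm * y / z)

uncle_bobs_head = (0.2, 0.3, 3.0)    # 3 meters from the camera
telephone_pole = (2.0, 3.0, 30.0)    # 30 meters away, along the same line of sight

head_xy = project(uncle_bobs_head)
pole_xy = project(telephone_pole)
print(head_xy, pole_xy)
print(all(math.isclose(h, p) for h, p in zip(head_xy, pole_xy)))
```

Two points 27 meters apart in depth land on the same spot on the sensor. Nothing in the recorded image says which one is in front.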

Light

This is fundamental to our cameras. There has to be a light source. The camera sees only light from a source or light that is reflected or transmitted by objects. But being humans, we interpret the real world as objects. They are “there”. They have mass and form and value and color. Not so to the camera. It doesn’t ascribe meaning to a scene. All a camera can record is light. Our fancy sensor doesn’t see a red ball. It detects, but doesn’t care, that there is a preponderance of light in the red band being recorded.

By its very definition – photo-graphy means writing with light – photography is dependent on light. Our modern sensors are marvelous products. We can shoot at very high ISO and make exposures in almost total darkness. But if any image was recorded, there was some actual light available.

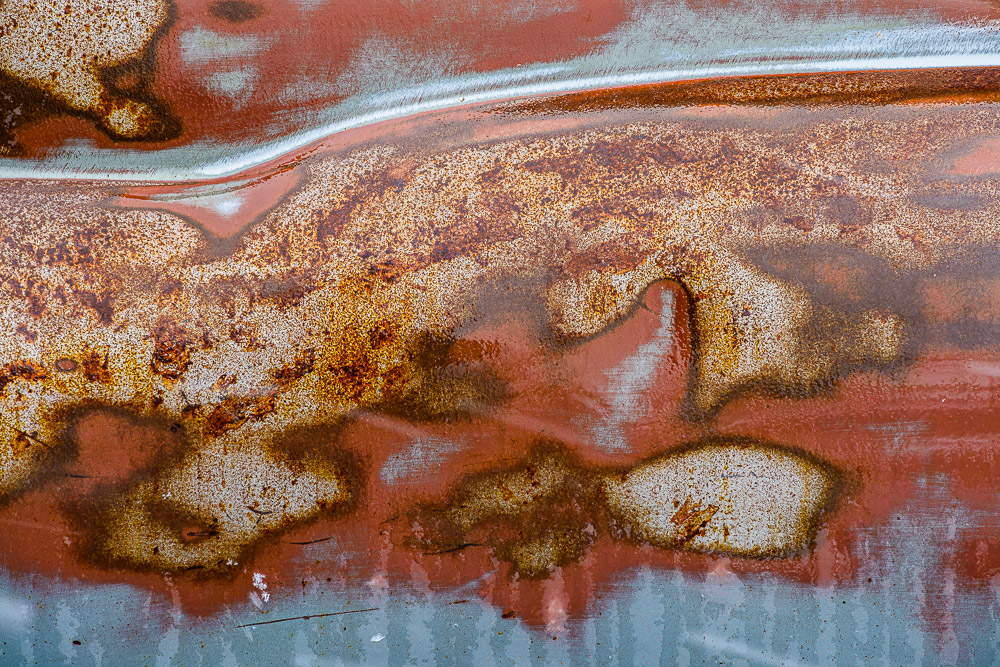

Everything in every image we make is a record of light. More than almost any other art form, photography is dependent on light. Photographers must be intensely sensitive to the direction and quality and color of the light sources that are illuminating our scene. Likewise we must be very aware of the objects the light is falling on, their shape and texture and reflectivity and color.

Learning to see, again

Art in general, and photography in particular, is a lifelong learning process. We learn to see creatively. We learn to see compositions and design. And we have to learn to see the way the camera sees. This is the way we capture the image we want.

Note: after writing this, I found a good article by David duChemin. He is a great writer.