I have written about image sharpness before, but I was recently challenged by a new viewpoint. An author I respect made an assertion that gave me pause. He pointed out that enlarging film is an optical scaling, whereas enlarging a digital image requires modifying the information, and he implied that modifying information is bad. So I wondered: is digital scaling bad?

Edges and detail

Let me get two things out of the way. When we are discussing scaling we only mean upscaling, that is, enlarging an image. Shrinking or reducing an image size is not a problem for either film or digital.

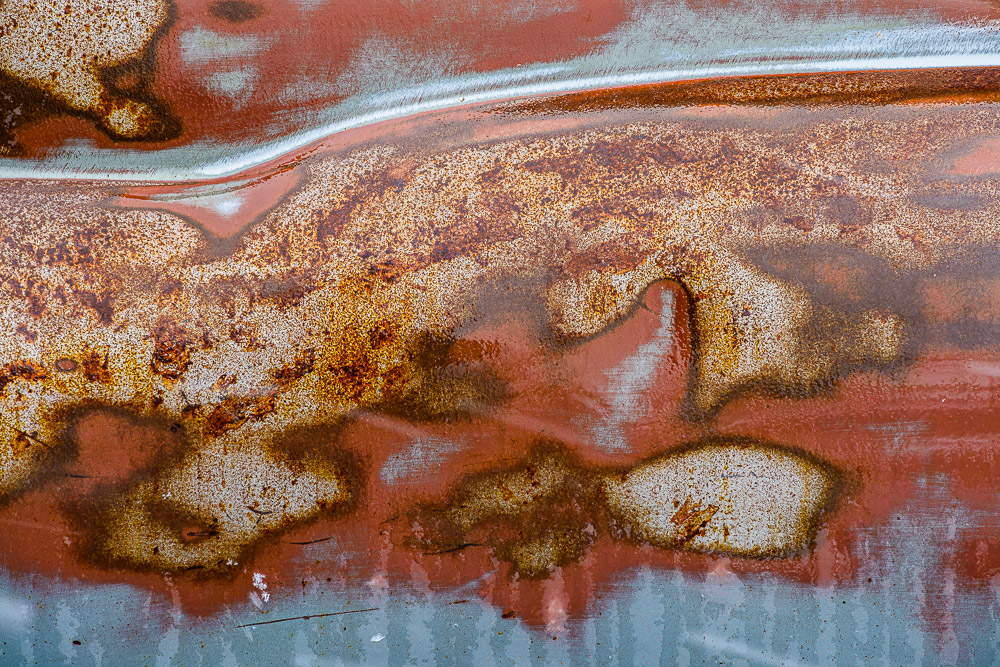

The other thing is that the problems from upscaling mostly show up at edges or in finely detailed areas. An edge is a transition from light to dark or dark to light. The more resolution the medium has to keep the abruptness of the transition, the sharper it looks to us. Areas with gradual tone transitions, like clouds, can be enlarged a lot with little degradation.

Optical scaling

As Mr. Freeman points out, enlarging prints from film relies on optical scaling. An enlarger (a big camera, used backward) projects the negative onto print paper on a platen. Lenses and height extensions are used to enlarge the projected image to the desired size.

This is the classic darkroom process that was used for well over 100 years. It still is used by some. It is well proven.

But is it ideal? The optical zooming process enlarges everything. Edges become stretched and blurred, and noise is magnified. It is a near-exact magnified image of the original piece of film. Unless it is a contact print of an 8×10 inch or larger negative, it has lost resolution. Walk up close to it and it looks blurry and grainy.

Digital scaling

Digital scaling is generally a very different process. Scaling of digital images is usually an intelligent process that does not just multiply the size of everything. It is based on algorithms that look at the spatial frequency of the information – the amount of edges and detail – and scale to preserve that detail.
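To make the idea of a resampling algorithm concrete, here is a minimal sketch in Python. It works on a single row of pixel values crossing an edge and compares two textbook methods: nearest neighbor, which simply repeats pixels, and linear interpolation, which computes new in-between values. The function names and the sample row are my own illustrations, and this is not a claim about what Photoshop or any other product actually does internally.

```python
def upscale_nearest(row, factor):
    """Enlarge by repeating each pixel `factor` times (pure magnification)."""
    return [value for value in row for _ in range(factor)]


def upscale_linear(row, factor):
    """Enlarge by linearly interpolating between neighboring pixels."""
    out = []
    for i in range(len(row) * factor):
        # Map the center of output pixel i back to a source coordinate.
        pos = (i + 0.5) / factor - 0.5
        # Clamp so the outermost pixels reuse the border values.
        pos = min(max(pos, 0.0), len(row) - 1.0)
        left = int(pos)
        right = min(left + 1, len(row) - 1)
        t = pos - left  # fractional distance from the left neighbor
        out.append((1 - t) * row[left] + t * row[right])
    return out


# A hypothetical dark-to-light edge, four pixels wide.
edge = [0, 0, 255, 255]

print(upscale_nearest(edge, 2))
print([round(v) for v in upscale_linear(edge, 2)])
```

Both methods produce eight pixels from four, but only the second one invents values that were not in the original row. That is the "modifying the information" part. More elaborate algorithms differ mainly in how many neighbors they consult and how they weight them.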

For instance, one of the common tools for enlarging images is Photoshop. The Image Size dialog is where this is done. When Resample is checked, there are 7 choices of scaling algorithms besides the default “Automatic”. I only use Automatic. From what I can figure out, it analyzes the image and decides which of the scaling algorithms is optimal. It works very well.

All of these operations modify the original pixels. That is common when working with digital images and it is desirable. As a matter of fact, it is one of the advantages of digital. A non-destructive workflow should be followed to allow re-editing later.

Scaling is normally done as a last step before printing. The file is customized to the final image size, type of print surface, and printer and paper characteristics. So it is typical to do this on a copy of the edited original. In this way the original file is not modified for a particular print size choice.
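The arithmetic behind customizing a file to a print size is simple enough to sketch. The numbers below are assumptions chosen only for illustration: a 6000×4000 pixel file, a 24×16 inch print, and 300 pixels per inch, which is a common rule of thumb rather than a requirement of any particular printer.

```python
def print_pixels(width_in, height_in, ppi):
    """Pixel dimensions needed to print at the given size and resolution."""
    return round(width_in * ppi), round(height_in * ppi)


def scale_factor(source_px, target_px):
    """How much one dimension must be enlarged (values above 1 mean upscaling)."""
    return target_px / source_px


# Hypothetical example values, not a recommendation.
source_w, source_h = 6000, 4000
target_w, target_h = print_pixels(24, 16, ppi=300)

print(target_w, target_h)
print(scale_factor(source_w, target_w))
```

Here the print needs 7200×4800 pixels, so the copy has to be enlarged by a factor of 1.2 in each direction. Pick a different print size and the factor changes, which is exactly why this is done on a copy rather than on the edited original.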

Sharpening

In digital imaging, it is hard to talk about scaling without talking about sharpening. They go together. The original digital image you load into Lightroom (or whatever you use) looks pretty dull. All of the captured data is there, but it doesn’t look like what we remember or want. It is similar to the need for extensive darkroom work to print black & white negatives.

One of the processes in digital photography in general, and after scaling in particular, is sharpening. There are different kinds and degrees of sharpening and several places in the workflow where it is usually applied. It is too complex a subject to talk about here.

But sharpening deals mainly with the contrast around edges. An edge is a sharp increase in contrast. The algorithms increase the contrast where an edge is detected.

This changes the pixels. It’s not like painting out somebody you don’t want in the frame, but it is a change.

By the way, one of the standard sharpening techniques is called Unsharp Mask. It is mind-bending, because it is a way of sharpening an image by blurring it. Non-intuitive. But the point here is that this is digital mimicry of a well-known technique used by film printers. So the old film masters used the same type of processing tricks to achieve the results they wanted. They even spotted and retouched their negatives.
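If sharpening by blurring sounds impossible, a small sketch may help. The idea is to blur a copy, subtract it from the original to find where the two differ (which is only near edges), and add that difference back. The version below runs on one row of pixels and uses a simple box blur where real tools typically use a Gaussian blur. The function names, the `amount` and `radius` parameters, and the sample values are my own assumptions for illustration.

```python
def box_blur(row, radius=1):
    """Average each pixel with its neighbors (a stand-in for a Gaussian blur)."""
    out = []
    for i in range(len(row)):
        lo = max(0, i - radius)
        hi = min(len(row), i + radius + 1)
        window = row[lo:hi]
        out.append(sum(window) / len(window))
    return out


def unsharp_mask(row, amount=1.0, radius=1):
    """Sharpen by adding back the difference between the row and its blur."""
    blurred = box_blur(row, radius)
    sharpened = []
    for original, soft in zip(row, blurred):
        value = original + amount * (original - soft)
        # Keep results inside the usual 8-bit range.
        sharpened.append(min(255.0, max(0.0, value)))
    return sharpened


# A hypothetical soft gray edge: dark on the left, light on the right.
edge = [50, 50, 50, 200, 200, 200]
print(unsharp_mask(edge))  # [50.0, 50.0, 0.0, 250.0, 200.0, 200.0]
```

Notice that the flat areas are untouched. Only the two pixels on either side of the edge change: the dark side gets darker and the light side gets lighter, so the contrast across the edge goes up.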

Modifying pixels

Let me briefly hit on what I think is the basic stumbling block at the bottom of this. Some people have it in their head that there is something wrong or non-artistic about modifying pixels. That is a straw man. It’s as silly as saying you’re not a good oil painter if you mix your colors, since they are no longer the pure colors that came out of the tubes. I have mentioned before that great prints of film images are often very different from the original frame. Does that make them less than genuine?

Art is about achieving the result you want to present to your viewers. How you get there shouldn’t matter much, and any argument of “purity” is strictly a figment of the objector’s imagination.

One of the great benefits of digital imaging is the incredible malleability of the digital data. It can be processed in ways the film masters could only dream of. We as artists need to use this capability to achieve our vision and bring our creativity to the end product.

I am glad I live in an era of digital imaging. I freely modify pixels in any way that seems appropriate to me.