Have you ever considered that the great sensor in your camera only sees in black & white? How, then, do we get color images? It turns out that there is some very interesting and complicated engineering involved behind the scenes. I will try to give an idea of it without getting too technical.

Sensor

I have discussed digital camera sensors before. They are marvelous, unbelievably complicated and sophisticated chips. But they are, still, passive collectors of the photons (light) that fall on them.

An individual imaging site is a small area that collects light and turns it into an electrical signal that can be read and stored. The sensor packs an unimaginable number of these sites into a chip. A “full frame” sensor has an imaging area of 24mm x 36mm, approximately 1 inch by 1.5 inch. My sensor divides that area into 47 million image sites, or pixels. It is called “full frame” because that was the historical size of a 35mm film frame.
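To get a feel for how small each site is, here is a rough back-of-the-envelope calculation. It is only a sketch: it assumes the sites are square and tile the full 24mm x 36mm area with no gaps, which real sensors only approximate. The variable names are mine.

```python
import math

# Figures from the text: a 24mm x 36mm sensor with 47 million sites.
width_mm, height_mm = 36.0, 24.0
pixels = 47e6

# Assumption: square sites that cover the whole area with no gaps.
area_um2 = (width_mm * 1000) * (height_mm * 1000)  # sensor area in square microns
site_area_um2 = area_um2 / pixels                  # area available to one site
pitch_um = math.sqrt(site_area_um2)                # side length of one site

print(round(pitch_um, 2))  # 4.29
```

Under those assumptions each site is a little over 4 microns across, far thinner than a human hair.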

But, and this is what most of us miss, the sensor is color blind. It receives and records all frequencies in the visible range. In the film days it would be called panchromatic. That is just a fancy word to say it records in black & white all the tones we typically see across all the colors.

This would be awesome if we only shot black & white. Most of us would reject that.

Need to introduce selective color

So to be able to give us color, the sensor needs to be able to selectively respond to the color ranges we perceive. This is typically Red, Green, and Blue, since these are “primary” colors that can be mixed to create the whole range.

Several techniques have been proposed and tried. A commercially successful implementation is Sigma’s Foveon design. It basically stacks three light-sensitive layers on top of each other in a single chip. The layers are designed so that shorter wavelengths (blue) are absorbed by the top layer, medium wavelengths (green) are absorbed by the middle layer, and long wavelengths (red) are absorbed by the bottom layer. A very clever idea, but it is expensive to manufacture and has problems with noise.

Perfect color separation could be achieved using three sensors with a large color filter over each. Unfortunately this requires a very complex and precise arrangement of mirrors or prisms to split the incoming light to the three sensors. In the process, it reduces the amount of light hitting each sensor, causing problems with image capture range and noise. It is also very difficult and expensive to manufacture and requires 3 full size sensors. Since the sensor is usually the most expensive component of a camera, this prices it out of competition.

Other things have been tried, such as a spinning color wheel over the sensor. If the exposure is captured in sync with the wheel rotation then 3 images could be exposed in rapid sequence giving the 3 colors. Obviously this imposes a lot of limitations on photographers, since the rotation speed has to match the shutter speed. A real problem for very long or very short exposures or moving subjects.

Bayer filter

Thankfully, a practical solution was developed by Bryce Bayer of Kodak. It was patented in 1976, but the patent has expired and the design is freely used by almost all camera manufacturers.

The brilliance of this was to enable color sensing with a single sensor by placing a color filter array (CFA) over the sensor to make each pixel site respond to only one color. You may have seen pictures of it. Here is a representation of the design:

The gray grid at the bottom represents the sensor. Each cell is a photo site. An array of colored filters is placed directly over the sensor, one filter above each photo site. Each filter is either red, green, or blue. Note that there are twice as many green filters as either red or blue. This is important.
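If you like to see things in code, the filter layout described above can be sketched in a few lines of Python with NumPy. This is only an illustration: the function names are mine, and I am assuming the common "RGGB" ordering of the repeating 2x2 tile. Cameras differ in which corner the pattern starts on.

```python
import numpy as np

def bayer_mask(height, width):
    """Label each photo site 'R', 'G' or 'B' using an assumed RGGB tile."""
    mask = np.empty((height, width), dtype="<U1")
    mask[0::2, 0::2] = "R"  # even rows, even columns
    mask[0::2, 1::2] = "G"  # even rows, odd columns
    mask[1::2, 0::2] = "G"  # odd rows, even columns
    mask[1::2, 1::2] = "B"  # odd rows, odd columns
    return mask

def mosaic(rgb):
    """Simulate the sensor: keep only one color sample per photo site."""
    height, width, _ = rgb.shape
    mask = bayer_mask(height, width)
    raw = np.zeros((height, width), dtype=rgb.dtype)
    for channel, letter in enumerate("RGB"):
        selected = mask == letter
        raw[selected] = rgb[..., channel][selected]
    return raw

mask = bayer_mask(4, 4)
counts = {letter: int((mask == letter).sum()) for letter in "RGB"}
print(mask)
print(counts)  # {'R': 4, 'G': 8, 'B': 4}
```

The counts confirm the point made above: half of the sites are green, and a quarter each are red and blue.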

But wait, we expect that each pixel in our image contains full RGB color information. With this filter array each pixel only sees one color. How does this work?

It works through some brilliant engineering with a bit of magic sprinkled in. Full color information for each pixel is constructed by interpolating based on the colors of surrounding pixels.

Restore resolution

Some sophisticated calculations have to be done to calculate the color information for each pixel. This makes each pixel end up with full RGB color values. The process is termed “demosaicking” in tech speak.

I promised to keep it simple. Here is a very simple illustration. In the figure below, if we wanted to derive a value of green for the cell in the center, labeled 5, we could average the green values of the surrounding cells. So an estimate of the green value for cell red5 is (green2+green6+green8+green4)/4.
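The same averaging can be written as a tiny Python sketch. The function name and the sample numbers are made up for illustration. The 3x3 patch follows the numbering above, with cell 5 (a red site) in the center and green sites at cells 2, 4, 6 and 8.

```python
import numpy as np

def green_at(raw, row, col):
    """Estimate green at a red or blue site by averaging the four
    green neighbors directly above, below, left and right."""
    neighbors = [
        raw[row - 1, col],  # cell 2 (above)
        raw[row, col + 1],  # cell 6 (right)
        raw[row + 1, col],  # cell 8 (below)
        raw[row, col - 1],  # cell 4 (left)
    ]
    return sum(float(v) for v in neighbors) / 4

# Made-up raw values: corners are blue sites, edges are green, center is red.
raw = np.array([[ 40, 100,  42],
                [110, 200, 120],
                [ 44, 130,  46]])

print(green_at(raw, 1, 1))  # (100 + 120 + 130 + 110) / 4 = 115.0
```

Note that the red value recorded at the center (200) plays no part in this estimate. Only the neighboring green samples are used.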

This is a very oversimplified description. If you want to get in a little deeper here is an article that talks about some of the considerations without getting too mathematical. Or this one is much deeper but has some good information.

The real world is much messier. Many special cases have to be accounted for. For instance, sharp edges have to be dealt with specially to avoid color fringing problems. Many other considerations, such as balancing the colors, complicate the algorithms. It is very sophisticated. The algorithms have been tweaked for over 40 years since Mr. Bayer invented the technique. They are generally very good now.
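To give a flavor of what "dealing with edges" can mean, here is one simple idea sketched in Python: instead of always averaging all four green neighbors, average only along the direction where the values change least, so the estimate does not blend across an edge. This is my own minimal illustration of the general approach, with a made-up function name and numbers. It is not the algorithm any particular camera or raw converter uses.

```python
import numpy as np

def green_edge_aware(raw, row, col):
    """Estimate green at a red or blue site, averaging along the
    direction (vertical or horizontal) with the smaller change."""
    up, down = float(raw[row - 1, col]), float(raw[row + 1, col])
    left, right = float(raw[row, col - 1]), float(raw[row, col + 1])

    vertical_change = abs(up - down)
    horizontal_change = abs(left - right)

    if vertical_change < horizontal_change:
        return (up + down) / 2        # an edge crosses left-right: stay vertical
    if horizontal_change < vertical_change:
        return (left + right) / 2     # an edge crosses up-down: stay horizontal
    return (up + down + left + right) / 4  # no clear direction: plain average

# Made-up patch with a dark region on the left and a bright one on the right.
raw = np.array([[20, 200, 200],
                [20, 180, 200],
                [20, 200, 200]])

plain = (200 + 200 + 20 + 200) / 4
print(plain)                         # 155.0, dragged down by the dark side
print(green_edge_aware(raw, 1, 1))   # 200.0, stays with the bright side
```

The plain average mixes the dark and bright regions together, which is the kind of smearing that shows up as fringing along sharp edges.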

Thank you, Mr. Bayer. It has proven to be a very useful solution to a difficult problem.

All images interpolated

I want to emphasize that basically ALL images are interpolated to reconstruct what we see as the simple RGB data for each pixel. And this interpolation is only one step in the very complicated data transformation pipeline that gets applied to our images “behind the scenes”. This should take away the argument of some of the extreme purists who say they will do nothing in post processing to “damage” the original pixels or to “create” new ones. There really are no original pixels.

I understand your point of view. I used to embrace it, to an extent. But get over it. There is no such thing as “pure” data from your sensor, unless maybe you are using a Foveon-based camera. All images are already interpolated to “create” pixel data before you ever get a chance to even view them in your editor. In addition, profiles, lens corrections, and other transformations are applied.

Digital imaging is an approximation, an interpretation of the scene the camera was pointed at. The technology has improved to the point that this approximation is quite good. Based on what we have learned, though, we should have a more lenient attitude about post processing the data as much as we feel we need to. It is just data. It is not an image until we say it is, and whatever the data is at that point defines the image.

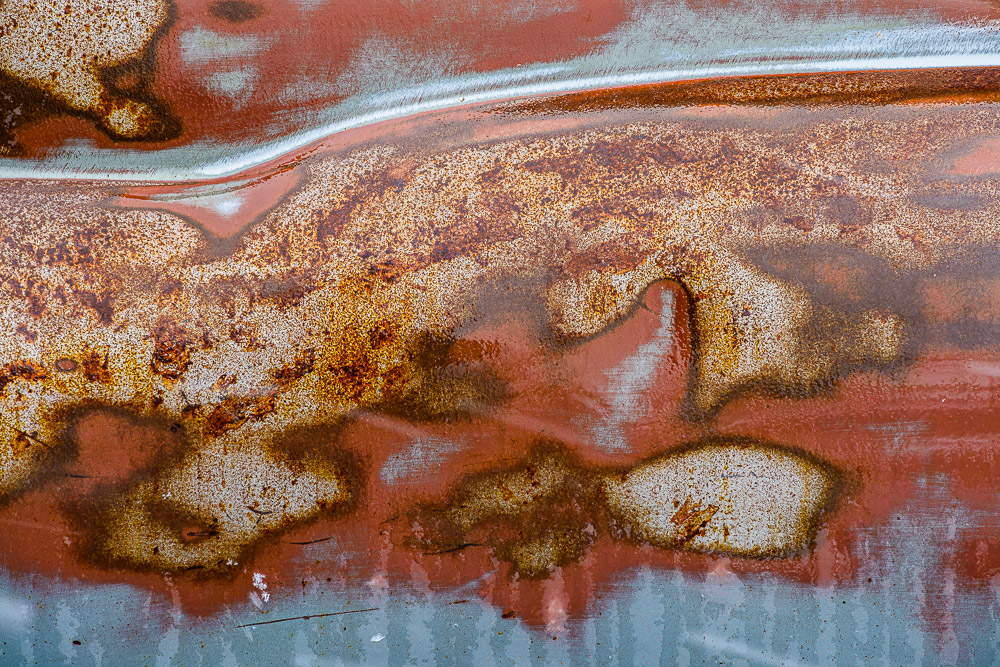

The image

I chose the image at the head of this article to illustrate that the Bayer filter demosaicking and other image processing steps give us very good results. The image is detailed, with smooth, well defined color variation and good saturation. And this is a 10-year-old sensor and technology. Things are even better now. I am happy with our technology and see no reason to not use it to its fullest.

Feedback?

I felt a need to balance the more philosophical, artsy topics I have been publishing with something more grounded in technology. Especially as I have advocated that the craft is as important as the creativity. I am very curious to know if this is useful to you and interesting. Is my description too simplified? Please let me know. If it is useful, please refer your friends to it. I would love to feel that I am doing useful things for people. If you have trouble with the comment section you can email me at ed@schlotzcreate.com.